Deep learning has revolutionized artificial intelligence, enabling breakthroughs that seemed impossible just a decade ago. From face recognition systems that match or exceed human accuracy on some benchmarks to conversational systems that can sustain sophisticated dialogue, deep learning powers many of the most impressive AI applications today. Understanding how neural networks work provides insight into both their remarkable capabilities and their limitations.

The Biological Inspiration

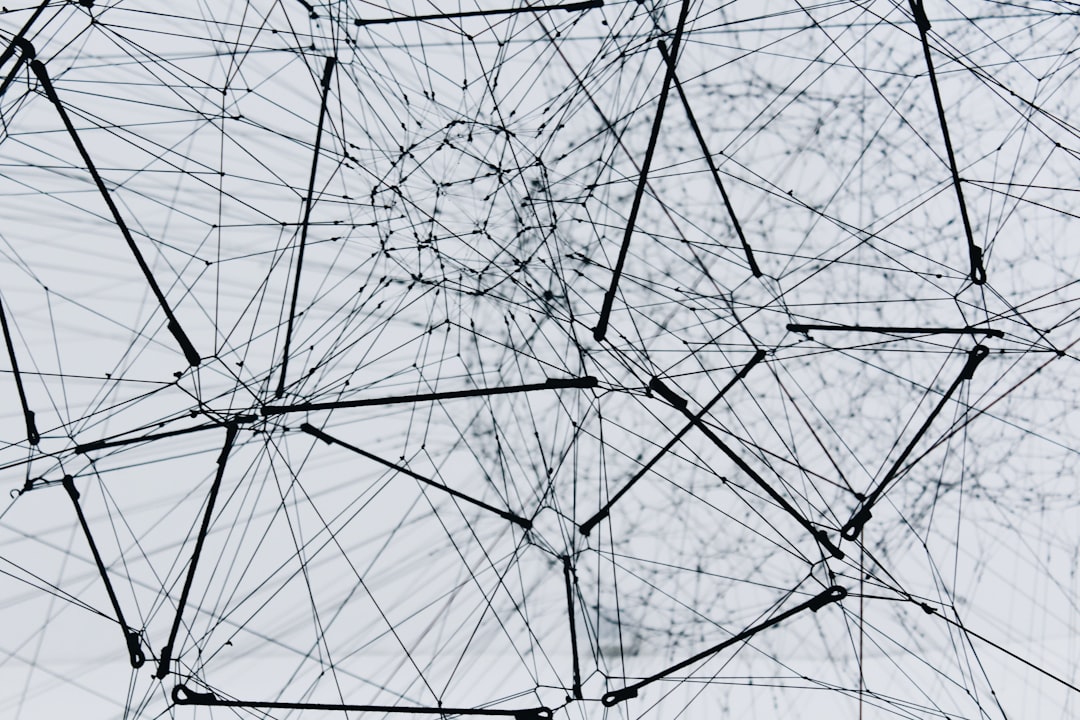

Neural networks draw inspiration from the human brain, though they're vastly simplified compared to biological neural systems. The brain contains billions of neurons, each connected to thousands of others, processing information through electrical and chemical signals. Artificial neural networks attempt to mimic this structure using mathematical models, creating systems that can learn complex patterns from data.

Each artificial neuron receives inputs, processes them using mathematical functions, and produces an output. These neurons are organized in layers, with connections between them carrying weighted signals. During learning, the network adjusts these weights to improve its performance on specific tasks. While inspired by biology, modern neural networks are ultimately mathematical constructs optimized for specific computational tasks rather than faithful recreations of biological systems.
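As a minimal sketch of the neuron described above, the following computes a weighted sum of the inputs, adds a bias, and applies an activation function. The sigmoid activation and the specific weights are illustrative assumptions, not values from any particular network.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)

# Hypothetical inputs and weights chosen so the weighted sum is exactly 0.
output = neuron(inputs=[1.0, 2.0], weights=[0.5, -0.25], bias=0.0)
print(output)  # 0.5, because sigmoid(0) = 0.5
```

Learning, in this picture, means changing `weights` and `bias` so that `output` moves closer to the desired value.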

Neural Network Architecture

A typical neural network consists of an input layer that receives data, one or more hidden layers that process information, and an output layer that produces predictions. The term "deep" in deep learning refers to networks with many hidden layers, sometimes dozens or even hundreds. These deep architectures enable the network to learn hierarchical representations, with early layers detecting simple patterns and later layers combining them into increasingly complex concepts.
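The layered structure can be sketched as a chain of function calls, where each layer's output becomes the next layer's input. The layer sizes, the ReLU activation, and the hand-picked weights below are assumptions made purely for illustration.

```python
def relu(z):
    """Pass positive values through unchanged and clamp negatives to zero."""
    return max(0.0, z)

def identity(z):
    return z

def dense(inputs, weights, biases, activation):
    """One fully connected layer: each row of `weights` feeds one neuron."""
    return [
        activation(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]

# Hypothetical network: 2 inputs -> 2 hidden units -> 1 output.
hidden_w = [[1.0, -1.0], [0.5, 0.5]]
hidden_b = [0.0, 0.0]
out_w = [[1.0, 2.0]]
out_b = [0.5]

x = [2.0, 1.0]
hidden = dense(x, hidden_w, hidden_b, relu)          # [1.0, 1.5]
prediction = dense(hidden, out_w, out_b, identity)   # [4.5]
print(hidden, prediction)
```

A deeper network would simply insert more `dense` calls between the input and the output.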

Convolutional neural networks, specialized for processing grid-like data such as images, use layers that apply filters across the input, detecting local patterns like edges, textures, and eventually complex objects. This architecture has proven remarkably effective for computer vision tasks, enabling applications from facial recognition to medical image analysis.
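To make the idea of applying a filter across the input concrete, here is a small sketch that slides a 2x2 filter over a 4x4 image with no padding and a stride of 1. The filter values are hand-written for illustration, whereas a trained network would learn them. Strictly speaking this is cross-correlation, which is what deep learning libraries commonly compute under the name convolution.

```python
def conv2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

# A 4x4 image: dark (0) on the left, bright (1) on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hypothetical vertical-edge filter: responds where brightness rises left to right.
kernel = [
    [-1, 1],
    [-1, 1],
]
feature_map = conv2d(image, kernel)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The output is nonzero only in the middle column, which is exactly where the dark-to-bright edge sits.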

Recurrent neural networks process sequential data by maintaining internal state, allowing them to handle variable-length inputs and capture temporal dependencies. These networks excel at tasks involving sequences, such as language translation, speech recognition, and time series prediction. More recent architectures such as transformers, which replace recurrence with attention mechanisms, have pushed the boundaries of what's possible in natural language processing.
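The internal state that a recurrent network maintains can be sketched with a single scalar that is updated once per sequence element. The weights `w_x`, `w_h`, and `bias` are hypothetical fixed values, and the tanh update is one common choice rather than the only one.

```python
import math

def rnn_final_state(sequence, w_x=0.5, w_h=0.8, bias=0.0):
    """Fold a sequence of numbers into one hidden state, one step at a time."""
    h = 0.0  # the state starts empty
    for x in sequence:
        # The new state mixes the current input with the previous state.
        h = math.tanh(w_x * x + w_h * h + bias)
    return h

# The same function handles sequences of any length.
short = rnn_final_state([1.0])
longer = rnn_final_state([1.0, 0.0, 0.0])

# Order matters: the same values in a different order give a different state.
a = rnn_final_state([1.0, 0.0])
b = rnn_final_state([0.0, 1.0])
print(short, longer, a, b)
```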

The Learning Process

Neural networks are usually trained with backpropagation paired with gradient descent, a procedure that iteratively adjusts the network's weights to minimize the difference between predicted and actual outputs. The process begins with forward propagation, where input data flows through the network to produce predictions. These predictions are compared to correct answers using a loss function, which quantifies how wrong the network's outputs are.
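A loss function can be as simple as the average squared difference between predictions and targets. Mean squared error is used here as one common example, and the numbers are made up for illustration.

```python
def mean_squared_error(predictions, targets):
    """Average of the squared differences between predictions and targets."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

# Errors of 1 and -2 give squared errors of 1 and 4, so the mean is 2.5.
loss = mean_squared_error([2.0, 4.0], [1.0, 6.0])
print(loss)  # 2.5
```

A perfect prediction gives a loss of zero, and larger mistakes are penalized more than proportionally.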

The network then uses gradient descent to determine how to adjust each weight to reduce the loss. This involves calculating the gradient of the loss function with respect to each weight, indicating the direction and magnitude of change needed. These gradients propagate backward through the network, hence the name backpropagation, allowing every weight to be updated based on its contribution to the error.
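The backward flow of gradients can be sketched on a tiny two-weight chain, `h = w1 * x` followed by `y = w2 * h`, with a squared-error loss. This toy model and its values are assumptions chosen so each chain-rule step is visible. Real networks apply the same rule to vectors and matrices.

```python
def forward(w1, w2, x):
    h = w1 * x      # first "layer"
    y = w2 * h      # second "layer"
    return h, y

def loss_fn(w1, w2, x, target):
    _, y = forward(w1, w2, x)
    return (y - target) ** 2

def backward(w1, w2, x, target):
    """Apply the chain rule from the loss back to each weight."""
    h, y = forward(w1, w2, x)
    dL_dy = 2.0 * (y - target)   # how the loss changes with the output
    dL_dw2 = dL_dy * h           # the output depends on w2 through h
    dL_dh = dL_dy * w2           # pass the gradient back to the hidden value
    dL_dw1 = dL_dh * x           # the hidden value depends on w1 through x
    return dL_dw1, dL_dw2

w1, w2, x, target = 0.5, -1.5, 2.0, 1.0
grad_w1, grad_w2 = backward(w1, w2, x, target)
print(grad_w1, grad_w2)  # 15.0 -5.0

# Sanity check against a numerical estimate of the slope.
eps = 1e-6
numeric_w1 = (loss_fn(w1 + eps, w2, x, target) - loss_fn(w1 - eps, w2, x, target)) / (2 * eps)
```

Note that `dL_dh` is computed once and reused, which is the efficiency that makes backpropagation practical for networks with many layers.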

This process repeats for thousands or millions of examples, with the network gradually improving its performance. The learning rate, which determines how much weights change with each update, must be carefully chosen: if it is too high, the network may overshoot optimal values, and if it is too low, learning becomes impractically slow.
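The effect of the learning rate can be seen on a toy problem: minimizing f(w) = w^2, whose gradient is 2w and whose minimum is at w = 0. The function and the three rates below are illustrative assumptions, not recommended settings.

```python
def descend(learning_rate, steps=20, w=1.0):
    """Run plain gradient descent on f(w) = w**2 and return the final w."""
    for _ in range(steps):
        gradient = 2.0 * w
        w -= learning_rate * gradient
    return w

well_chosen = descend(0.1)    # shrinks steadily toward 0
too_high = descend(1.1)       # overshoots further on every step and diverges
too_low = descend(0.001)      # barely moves from the starting point
print(well_chosen, too_high, too_low)
```

Each update multiplies `w` by `1 - 2 * learning_rate`, so the rate of 0.1 shrinks it by a factor of 0.8 per step, while 1.1 flips its sign and grows its magnitude by 1.2.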

Training Challenges

Training deep neural networks presents several technical challenges. The vanishing gradient problem occurs when gradients become extremely small as they propagate backward through many layers, essentially preventing early layers from learning effectively. Researchers have developed various solutions, including specialized activation functions and architectural innovations like residual connections, which allow gradients to flow more easily through deep networks.
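A rough way to see the vanishing gradient is to multiply per-layer derivative factors along a chain. The sketch below is a deliberate simplification: it ignores the weights entirely and assumes every layer contributes the sigmoid's largest possible derivative, 0.25. The `residual` flag models a skip connection, where the layer computes x + f(x) and its derivative becomes 1 + f'(x).

```python
import math

def sigmoid_derivative(z):
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)

def gradient_scale(depth, z=0.0, residual=False):
    """Product of per-layer derivative factors along a chain of `depth` layers."""
    local = sigmoid_derivative(z)   # at most 0.25, reached at z = 0
    factor = 1.0 + local if residual else local
    return factor ** depth

plain = gradient_scale(10)                 # 0.25 ** 10, under one millionth
skip = gradient_scale(10, residual=True)   # never drops below 1 in this toy
print(plain, skip)
```

In this toy the residual factor grows with depth, which real networks keep in check through weight scaling and normalization. The point of the comparison is only that the identity path prevents the product from collapsing toward zero.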

Overfitting remains a persistent concern with neural networks, particularly deep ones with millions of parameters. These networks can memorize training data rather than learning generalizable patterns. Techniques like dropout, which randomly deactivates neurons during training, and data augmentation, which creates variations of training examples, help prevent overfitting and improve generalization.
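Dropout can be sketched in a few lines. This version uses the common "inverted" formulation, an assumption on my part rather than something the text specifies: surviving activations are scaled up during training so that no rescaling is needed at inference time. The function name and the seeded generator are illustrative.

```python
import random

def dropout(activations, rate, rng, training=True):
    """Zero each activation with probability `rate`; rescale the survivors."""
    if not training or rate == 0.0:
        return list(activations)   # inference: leave activations untouched
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

rng = random.Random(0)             # seeded so the run is repeatable
dropped = dropout([1.0] * 1000, rate=0.5, rng=rng)

# Roughly half the values are zeroed and the rest doubled,
# so the average stays close to the original value of 1.0.
average = sum(dropped) / len(dropped)
print(average)
```

Because a different random subset of neurons is silenced on each training step, the network cannot rely on any single neuron and is pushed toward more redundant, general features.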

Computational requirements for training deep networks can be substantial, often requiring specialized hardware like GPUs or TPUs. A single state-of-the-art language model might require weeks of training on clusters of high-end processors, consuming significant energy. This has sparked research into more efficient training methods and raised questions about the environmental impact of large-scale AI development.

Remarkable Applications

Computer vision has been transformed by deep learning. Image classification systems can now identify thousands of object categories with accuracy exceeding human performance on many benchmarks. Object detection networks locate and classify multiple objects within images, enabling applications from autonomous driving to security systems. Image segmentation algorithms partition images into meaningful regions, useful in medical imaging and satellite image analysis.

Natural language processing has seen dramatic advances thanks to deep learning. Modern language models can generate coherent, contextually appropriate text, translate between languages with near-human quality, and answer questions by understanding complex passages. These capabilities power virtual assistants, translation services, and content creation tools used by millions of people daily.

Generative models create new content by learning patterns from training data. Generative adversarial networks can produce photorealistic images that never existed, while variational autoencoders enable creative applications from style transfer to data compression. These technologies are changing creative industries, though they also raise important questions about authenticity and intellectual property.

Limitations and Considerations

Despite their impressive capabilities, neural networks have important limitations. They typically require large amounts of labeled training data, which can be expensive and time-consuming to collect. Transfer learning, where models pretrained on large datasets are adapted to new tasks with less data, partially addresses this challenge but doesn't eliminate it entirely.

Neural networks are often criticized as "black boxes" whose decision-making processes are opaque. While they can achieve high accuracy, understanding why they make specific predictions remains challenging. This lack of interpretability is particularly concerning in high-stakes applications like healthcare or criminal justice, where understanding the reasoning behind decisions is crucial.

Adversarial examples demonstrate that neural networks can be fooled by carefully crafted inputs that a human would still recognize correctly. Small, often imperceptible perturbations to an image can cause a network to misclassify it with high confidence. This vulnerability has implications for security-critical applications and highlights fundamental differences between artificial and human intelligence.
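The mechanism behind such perturbations can be sketched on a toy linear classifier, in the spirit of the fast gradient sign method. For a linear score the gradient with respect to the input is just the weight vector, so nudging every input feature slightly against the sign of its weight lowers the score as much as possible for a given per-feature budget. The weights, input, and `epsilon` are hypothetical.

```python
def sign(v):
    return (v > 0) - (v < 0)

def score(weights, x):
    return sum(w * xi for w, xi in zip(weights, x))

def classify(weights, x):
    return 1 if score(weights, x) > 0 else 0

def perturb(weights, x, epsilon):
    """Move every input by at most epsilon in the direction that lowers the score."""
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]

weights = [1.0, -1.0, 0.5]
x = [0.2, 0.1, 0.1]
x_adv = perturb(weights, x, epsilon=0.1)

print(classify(weights, x))      # 1
print(classify(weights, x_adv))  # 0
```

With only three features the change is not small relative to the input, but the score shifts by `epsilon` times the sum of the absolute weights. In high-dimensional inputs such as images, that sum runs over many pixels, so a far smaller per-pixel change can add up to the same flip.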

Future Directions

Research continues to push the boundaries of what's possible with neural networks. Attention mechanisms and transformer architectures have enabled dramatic improvements in natural language processing and are now being applied to computer vision and other domains. These architectures can process long sequences more effectively and capture complex dependencies in data.
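The core of an attention mechanism can be sketched for a single query: compare the query to every key, turn the similarities into weights with a softmax, and return the weighted average of the values. This follows the widely used scaled dot-product form. The vectors are made-up examples, and real transformers add learned projections and multiple heads that are omitted here.

```python
import math

def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    m = max(scores)                       # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, output

query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[10.0, 0.0], [0.0, 10.0], [-10.0, 0.0]]

attn_weights, attended = attention(query, keys, values)
print(attn_weights, attended)
```

Every position is compared with every other position directly, rather than through a chain of recurrent steps, which is one reason these architectures handle long-range dependencies well.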

Few-shot and zero-shot learning aim to create models that can learn new tasks from minimal examples, more closely mimicking human learning capabilities. Meta-learning approaches train networks to learn how to learn, adapting quickly to new tasks. These directions could reduce the data requirements that currently limit neural network applications.

Neuromorphic computing explores hardware architectures more closely inspired by biological brains, potentially offering dramatic improvements in energy efficiency. Quantum machine learning investigates whether quantum computers could accelerate certain neural network operations, though practical applications remain largely theoretical.

Conclusion

Deep learning and neural networks represent one of the most significant advances in artificial intelligence, enabling capabilities that seemed like science fiction just years ago. Understanding their architecture, learning processes, and limitations provides essential context for anyone working with AI technologies. While challenges remain, particularly around interpretability, data requirements, and computational costs, neural networks continue to expand the boundaries of what machines can do.

As these technologies mature and become more accessible, their impact will only grow. Whether you're building AI applications, making decisions about adopting AI tools, or simply trying to understand the technology shaping our world, a solid grasp of neural network fundamentals is increasingly valuable. The field evolves rapidly, but the core concepts covered here provide a foundation for continued learning and exploration.